SAR Robot for Gerontology

Description

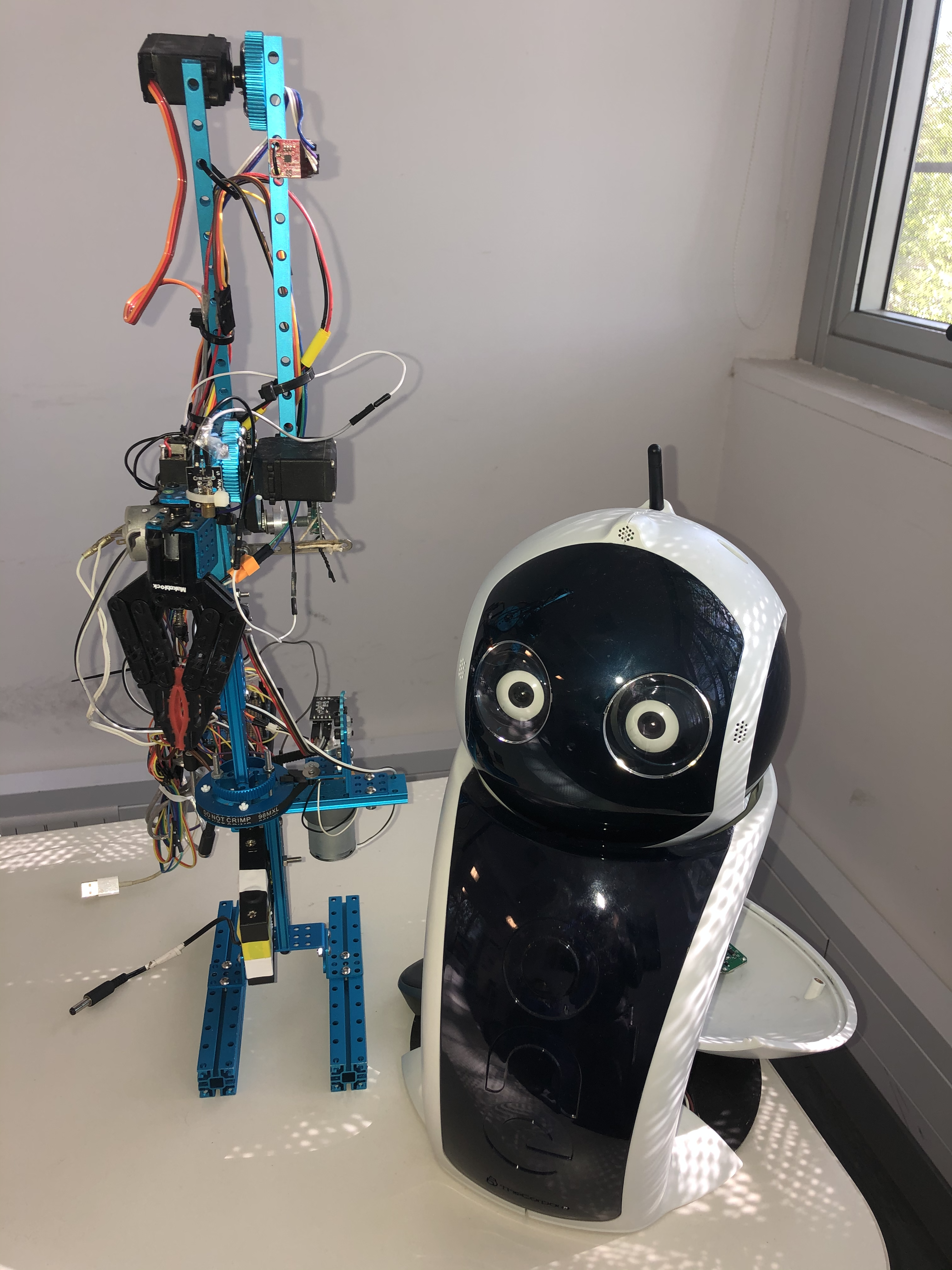

This is a Social Assistive Robot (SAR) built on top of the Open Hardware Q-BO project by TheCorpora, which is based on Raspberry Pi (RPi) and Arduino. The robot hardware combines an RPi single-board computer (SBC) with a microcontroller based on an Arduino port. It has two capacitive sensors on each side of the face that react to touch, an LED array for the mouth, and a multifrequency LED for the nose. It can pan and tilt its head using wonderful Dynamixel servos. It has two speakers and two microphones. It supports Speech-To-Text (STT) and, in the opposite direction, Text-To-Speech (TTS) through a traditional, metallic-sounding synthesizer. Tooly already has both, and they work fairly well. Finally, it has two low-resolution USB cameras, one in each eye, calibrated for 3D depth and programmed to follow faces. Additionally, the robot has a basic external 7-DOF arm with a payload of 0.5 kg that can be controlled from the serial bus of the Raspberry Pi.
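As an illustration of how face following can drive the pan and tilt of the head, here is a minimal sketch of a proportional controller. It is not the code running on the robot: the names `HeadPose` and `follow_face`, the gain, the angle limits, and the sign conventions are all assumptions made for the example, and sending the resulting angles to the Dynamixel servos is left out.

```python
from dataclasses import dataclass


@dataclass
class HeadPose:
    """Hypothetical head pose in degrees (0, 0 = looking straight ahead)."""
    pan_deg: float
    tilt_deg: float


def clamp(value, low, high):
    """Keep value inside the closed interval [low, high]."""
    return max(low, min(high, value))


def follow_face(pose, face_center, frame_size, gain_deg=10.0,
                pan_limits=(-60.0, 60.0), tilt_limits=(-20.0, 20.0)):
    """Return a new pose that moves the head toward the detected face.

    face_center: (x, y) pixel position of the face in the camera image.
    frame_size:  (width, height) of the camera image in pixels.
    The gain and the limits are illustrative values, not measured ones.
    """
    cx, cy = face_center
    width, height = frame_size
    # Normalised offset of the face from the image centre, in [-1, 1].
    err_x = (cx - width / 2) / (width / 2)
    err_y = (cy - height / 2) / (height / 2)
    # Assumed convention: positive pan turns toward the right of the image,
    # positive tilt looks up (image y grows downward, hence the minus sign).
    new_pan = clamp(pose.pan_deg + gain_deg * err_x, *pan_limits)
    new_tilt = clamp(pose.tilt_deg - gain_deg * err_y, *tilt_limits)
    return HeadPose(new_pan, new_tilt)


if __name__ == "__main__":
    pose = HeadPose(0.0, 0.0)
    # Face detected to the right of centre in a 320x240 frame.
    print(follow_face(pose, face_center=(240, 120), frame_size=(320, 240)))
```

Calling `follow_face` once per detected frame nudges the head a few degrees at a time, and the clamping keeps the commanded angles inside the assumed mechanical range.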

Project

This project involves the development of an Embodied Conversational Agent (ECA), a SAR robot to assist in gerontology settings. It can be used as a research platform for building a workable product for elderly assistance, whether for caregivers or for home-care therapy. We implemented the ECA using Meta's Llama 2 model, which is customized to act as a robot companion for elderly care.
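One common way to give a Llama 2 chat model a persona is a system prompt. The sketch below assembles a prompt in the Llama 2 chat template (`[INST]`, `<<SYS>>`). It is only an assumption about how the customization could be done: the persona text and the helper name `build_llama2_prompt` are hypothetical, and the project may use fine-tuning or a different mechanism instead.

```python
# Hypothetical persona text, written for this example only.
SYSTEM_PROMPT = (
    "You are a friendly robot companion for older adults. "
    "Speak clearly, keep answers short, and be patient."
)


def build_llama2_prompt(system_prompt, history, user_message):
    """Build a prompt string in the Llama 2 chat template.

    history is a list of (user, assistant) pairs from earlier turns.
    The <s> and </s> markers are written as text here; many tokenizers
    add them on their own, in which case they should be left out.
    """
    turns = list(history) + [(user_message, None)]
    parts = []
    for index, (user, assistant) in enumerate(turns):
        if index == 0:
            # The system prompt is wrapped inside the first user turn.
            user = f"<<SYS>>\n{system_prompt}\n<</SYS>>\n\n{user}"
        segment = f"<s>[INST] {user.strip()} [/INST]"
        if assistant is not None:
            segment += f" {assistant.strip()} </s>"
        parts.append(segment)
    return "".join(parts)


if __name__ == "__main__":
    history = [("Good morning!", "Good morning! How did you sleep?")]
    print(build_llama2_prompt(SYSTEM_PROMPT, history, "Quite well, thank you."))
```

The resulting string is what would be sent to the model after the STT step, and the model's reply would then go to the TTS synthesizer.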

Scope

We need to fix several issues with the robot's autonomy and iterate with Llama 3 in Spanish.

- Bibliography:

Code